Description:

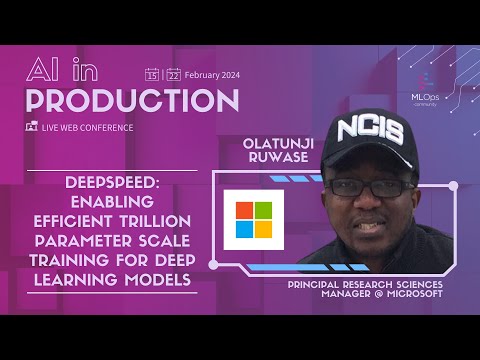

Explore the challenges of, and solutions for, efficient trillion-parameter-scale training of deep learning models in this talk from the AI in Production Conference. Delve into DeepSpeed, a deep learning optimization library designed to make distributed model training and inference efficient, effective, and easy on commodity hardware. Learn about training optimizations that improve memory, compute, and data efficiency for extreme model scaling. Gain insights from Olatunji (Tunji) Ruwase, co-founder and lead of the DeepSpeed project at Microsoft, who draws on his expertise in building systems, convergence optimizations, and frameworks for distributed training and inference of deep learning models.

Enabling Efficient Trillion Parameter Scale Training for Deep Learning Models