Description:

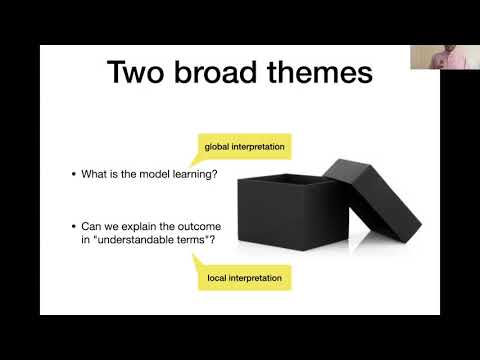

Explore model interpretation in neural networks for natural language processing in this lecture from CMU's CS 11-747 course. Learn why interpretability matters and how it is defined, and examine two broad themes in the field. Investigate how source syntax is captured in neural machine translation, and see why neural translations come out at appropriate lengths. Analyze sentence embeddings in depth, including probing techniques and their limitations. Learn about Minimum Description Length (MDL) probes and how they are evaluated. Examine explanation techniques such as gradient-based importance scores and extractive rationale generation. Gain insight into the inner workings of neural models for NLP applications.

CMU Neural Nets for NLP: Model Interpretation

#Computer Science

#Artificial Intelligence

#Neural Networks

#Natural Language Processing (NLP)

#Machine Learning

#Interpretability

#Sentence Embedding