Description:

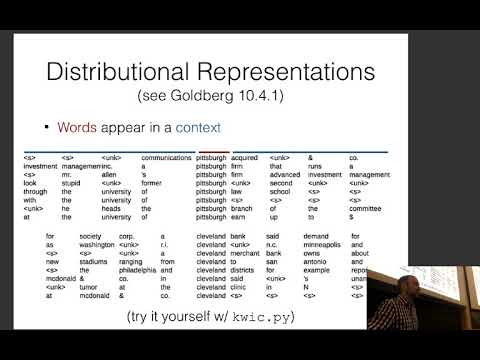

Explore word vectors in natural language processing through this lecture from CMU's Neural Networks for NLP course. Dive into techniques for describing words by their context, including count-based methods and prediction-based methods such as skip-gram and continuous bag-of-words (CBOW). Learn how to evaluate word vectors, both intrinsically and extrinsically, and how to visualize them. Examine the limitations of traditional embeddings and explore more nuanced word representations, such as sub-word and multi-prototype embeddings. Finally, see how word embeddings are applied in practical NLP systems.
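As an illustration (not taken from the lecture itself), skip-gram and CBOW differ mainly in how they turn a context window into training examples. Skip-gram uses the center word to predict each surrounding word, while CBOW uses the surrounding words together to predict the center word. The sketch below assumes whitespace tokenization and a symmetric window. The function names `skipgram_pairs` and `cbow_examples` are hypothetical, and the code only builds training examples; it does not train any vectors.

```python
from typing import List, Tuple


def skipgram_pairs(tokens: List[str], window: int) -> List[Tuple[str, str]]:
    """Skip-gram: each center word is paired with every word in its window."""
    pairs = []
    for i, center in enumerate(tokens):
        lo = max(0, i - window)                 # clip window at sentence start
        hi = min(len(tokens), i + window + 1)   # clip window at sentence end
        for j in range(lo, hi):
            if j != i:                          # skip the center word itself
                pairs.append((center, tokens[j]))
    return pairs


def cbow_examples(tokens: List[str], window: int) -> List[Tuple[List[str], str]]:
    """CBOW: the bag of context words jointly predicts the center word."""
    examples = []
    for i, center in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(lo, hi) if j != i]
        examples.append((context, center))
    return examples


sentence = "the cat sat on the mat".split()
print(skipgram_pairs(sentence, window=1)[:4])
# → [('the', 'cat'), ('cat', 'the'), ('cat', 'sat'), ('sat', 'cat')]
print(cbow_examples(sentence, window=1)[:2])
# → [(['cat'], 'the'), (['the', 'sat'], 'cat')]
```

Both functions read the same windows. Skip-gram produces one example per (center, context) pair, while CBOW produces one example per position.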

Neural Nets for NLP 2019 - Word Vectors


#Computer Science

#Artificial Intelligence

#Neural Networks

#Natural Language Processing (NLP)

#Machine Learning

#Word Embeddings

#Word Vectors