Description:

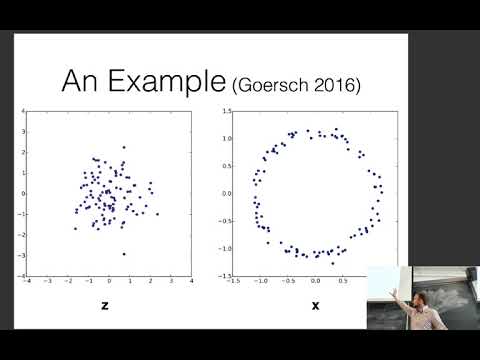

Explore models with latent random variables in neural networks for natural language processing in this lecture. Review the differences between discriminative and generative models and the role of latent random variables in NLP tasks. Learn about the Variational Autoencoder (VAE) objective, its interpretation, and solutions to sampling issues. Study techniques for training language models with latent variables, including KL divergence annealing and weakening the decoder. Examine methods for handling discrete latent variables, such as enumeration and the Gumbel-Softmax technique. Investigate practical applications in controllable text generation and symbol sequence latent variables, along with the challenges of training these models and their solutions.

CMU Neural Nets for NLP - Models with Latent Random Variables

#Computer Science

#Artificial Intelligence

#Neural Networks

#Natural Language Processing (NLP)

#Machine Learning

#Language Models

#Generative AI

#Generative Models

#KL Divergence