Description:

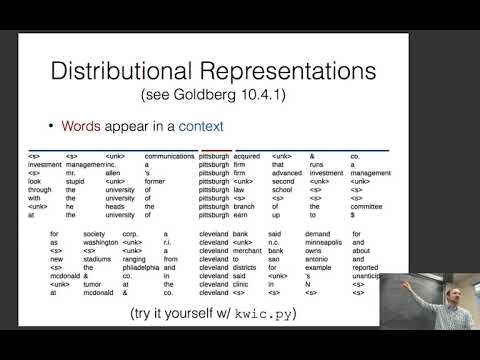

Explore the fundamentals of word representations in natural language processing through this lecture from Carnegie Mellon University's Neural Networks for NLP course. Survey approaches to modeling words, from manually built resources such as WordNet to modern word embedding techniques. Examine the difference between distributional and distributed representations, and learn about count-based and prediction-based methods for training word embeddings. Investigate the role of context in word representations and the techniques used to evaluate embedding quality. Analyze the strengths and limitations of word embeddings, and gain insight into choosing appropriate embeddings for a specific task. Conclude with sub-word embedding techniques that address limitations of word-level representations.

CMU Neural Nets for NLP 2018 - Models of Words

#Computer Science

#Artificial Intelligence

#Neural Networks

#Natural Language Processing (NLP)

#Machine Learning

#Word Embeddings