Description:

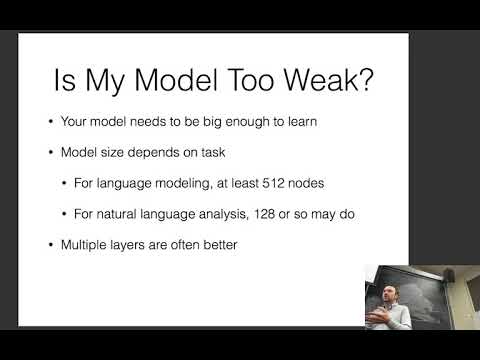

Explore techniques for debugging neural networks for natural language processing in this lecture from CMU's Neural Networks for NLP course. Learn to diagnose problems, address training-time and decoding-time issues, combat overfitting, and handle disconnects between the training loss and the evaluation metric. Gain insights into model sizing, optimization challenges, initialization strategies, and the effect of data sorting on performance. Discover approaches for debugging beam search and for implementing dev-driven learning rate decay, which lowers the learning rate when development-set performance stops improving.

Debugging Neural Nets for NLP

#Computer Science

#Artificial Intelligence

#Neural Networks

#Natural Language Processing (NLP)

#Machine Learning

#Overfitting

#Model Optimization