Description:

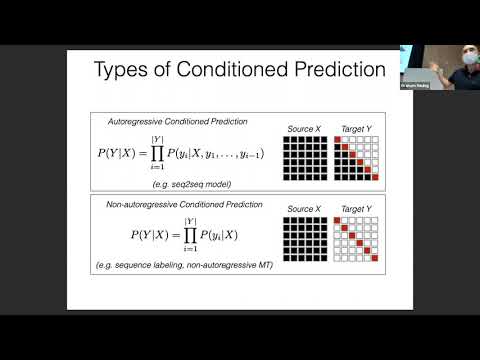

Explore recurrent neural networks in this advanced natural language processing lecture from Carnegie Mellon University. Delve into the intricacies of long-distance dependencies, the Winograd Schema Challenge, and various types of predictions. Examine the structure and functionality of recurrent networks, addressing the vanishing gradient problem and introducing Long Short-Term Memory (LSTM) networks. Analyze the strengths and weaknesses of recurrence in sentence modeling, and discover the potential of pre-training techniques for RNNs. Gain insights into efficiency considerations and optimization strategies for these powerful deep learning models.

CMU Advanced NLP: Recurrent Neural Networks

#Computer Science

#Artificial Intelligence

#Natural Language Processing (NLP)

#Neural Networks

#Recurrent Neural Networks (RNN)

#Long Short-Term Memory (LSTM)